Why Tesla Can Program Its Cars to Break Road Safety Laws

Thousands of Teslas are now being equipped with a feature that prompts the car to break common traffic laws — and the revelation is prompting some advocates to question the safety benefits of automated vehicle technology when unsafe human drivers are allowed to program it to do things that endanger other road users.

In an October 2021 update to its deceptively named “Full Self Driving Mode” beta software, the controversial Texas automaker introduced a new feature that allows drivers to pick one of three custom driving “profiles” (“chill,” “average,” and “assertive”), which moderate how aggressively the vehicle applies many of its automated safety features on U.S. roads.

The rollout went largely unnoticed by street safety advocates until a Jan. 9 article in The Verge, in which journalist Emma Roth revealed that putting a Tesla in “assertive” mode will effectively direct the car to tailgate other motorists, perform unsafe passing maneuvers, and roll through certain stops (“average” mode isn’t much safer). All those behaviors are illegal in most U.S. states, and experts say there’s no reason why Tesla shouldn’t be required to program its vehicles to follow the local rules of the road, even when drivers travel between jurisdictions with varying safety standards.

“Basically, Tesla is programming its cars to break laws,” said Phil Koopman, an expert in autonomous vehicle technology and associate professor at Carnegie Mellon University. “Even if [those laws] vary from state to state and city to city, these cars know where they are, and the local laws are clearly published. If you want to build an AV that drives in more than one jurisdiction and you want it to follow the rules, there’s no reason you can’t program it up to do that. It sounds like a lot of work, but this is a trillion-dollar industry we’re talking about.”

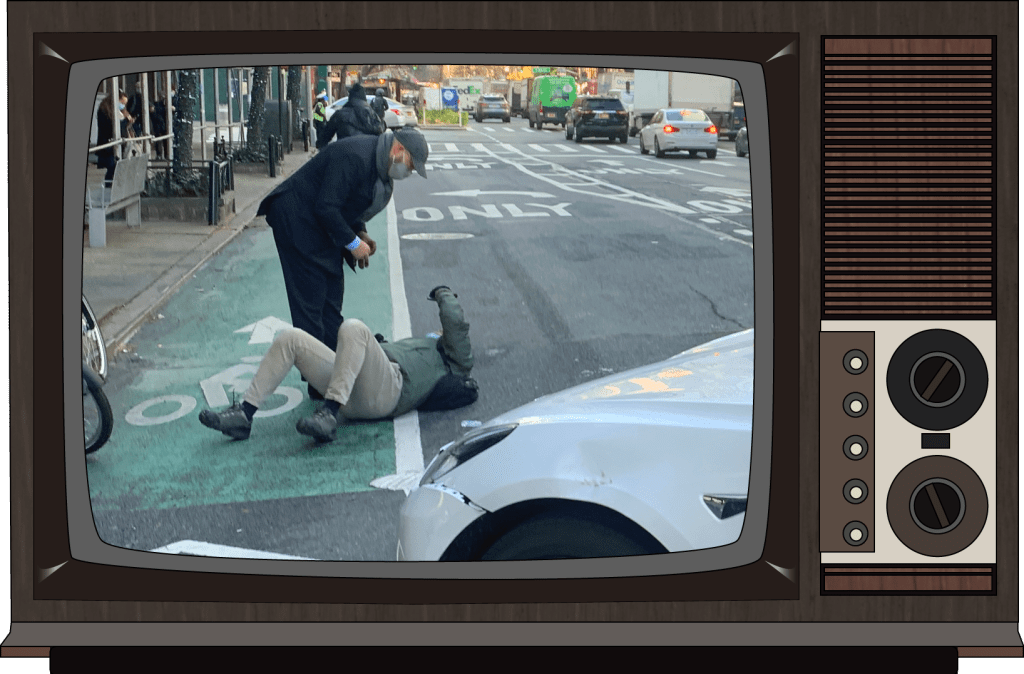

Video description: A Tesla vlogger demonstrates the “chill,” “average,” and “assertive” profiles on Tesla’s Full Self Driving beta software. At the 2:00 mark, the driver praises the “assertive” mode for automatically steering away from a cyclist, but admits that he “might have slowed down just a little bit” from its automated speed of 40 miles per hour. At the 8:48 mark, the “assertive” car illegally enters an intersection midway through a yellow light, while the other two modes are able to safely perform a complete stop.

Tesla fans were quick to defend the new Full Self Driving features, pointing out that when the company says its cars will perform rolling stops in “assertive” and “average” modes, it probably “doesn’t mean stop signs, but optional stops, such as pulling out of a driveway or parking lot,” as one fan blogger noted.

The problem, though, is that even parking lot stops aren’t actually optional, and failing to complete them can have deadly consequences. The National Safety Council estimates that 500 people die and 60,000 are injured in vehicle crashes in U.S. parking lots and garages every year, many of whom are pedestrians or motorists on their way to and from their cars. Moreover, Full Self Driving beta testers have recorded numerous videos of their Teslas rolling through stop signs and red lights — and experts say that it matters that the company is building tech that makes it easy to ignore stopping laws, even if not every Full Self Driving fail will result in an injury.

“Of course, some people will say, ‘Well, a rolling stop is OK if no one’s there,’” said Koopman. “But personally, I think it’s still a bad idea for a lot of reasons. One is that you’re assuming the car will actually see all the vulnerable road users who could be hurt. It can’t; what if someone pops out from behind the bush? What if there are defects in your own vehicle software? Full Self Driving is still in beta; we know there are defects. Why on earth is [Tesla] pushing the boundaries of the law when we already know it’s not perfect? How do you develop trust with the public while you’re doing that? How do you develop trust with regulators?”

Those regulators, though, have so far had a hard time reining in AV companies that fail to prevent their customers from flouting roadway rules, never mind ones like Tesla, which actively enable customers who would use automation in service of their personal convenience and speed rather than to enhance collective safety.

That’s in part because, by and large, U.S. law tends to favor penalizing individual drivers for breaking the law, rather than penalizing car manufacturers whose vehicle designs make breaking those laws easy. No automobile company has ever been prosecuted for installing an engine that can propel a car more than 100 miles an hour, for instance, even though such speeds aren’t legal on any road in America; nor have companies been held accountable when their customers use cruise control to speed, even though technology to automatically stop all speeding has existed for decades.

Koopman adds that advanced vehicle automation falls into an even murkier gray area between federal and state roadway laws, neither of which was designed to assign liability when a human driver and a vehicle computer are sharing the burden of executing more complex driving tasks.

“The way it’s worked, historically, is that [the National Highway Traffic Safety Administration] is in charge of vehicle safety, and individual states have been in charge of the safety of human drivers,” he said. “But it gets complicated when the car starts assuming the responsibility of the human driver…Stopping at a stop sign is not a federal law; it’s a state law. Sure, NHTSA can say your car design is unsafe because it’s breaking a lot of state laws, but they can’t enforce those laws themselves.”

Many states are pursuing legislation that would hold AV companies accountable for deploying vehicles that can violate roadway rules with the touch of a button, though others, under pressure from industry lobbyists, are passing bills aimed at encouraging AV testing on public roads and shielding manufacturers from legal action when their safety software fails. NHTSA, meanwhile, is initiating several investigations into Tesla for vehicle safety concerns, though it’s unlikely that “assertive mode” will prompt a recall anytime soon.

Given that regulatory landscape and the soaring stock prices that have accompanied the rollout of “Full Self Driving,” Koopman says Tesla doesn’t have much financial or legal incentive to make its drivers “chill” out behind the wheel. But that doesn’t mean the company doesn’t have an ethical responsibility to do it.

“If people behave dangerously while using your product, and you know they predictably do this, it’s not a question of whether you should do something about it: it’s a question of how much you should do,” added Koopman. “Doing nothing is not acceptable.”
